As I recall, the IRS agent ended up being a bit apologetic about the whole thing.

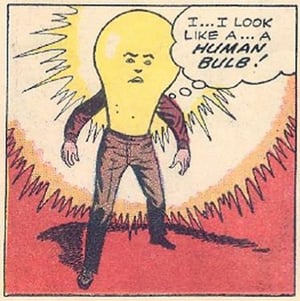

I remember this one. Silver age DC was weird, y’all.

2·1 day ago

Indeed; it definitely would show some promise. At that point, you’d run into the problem of needing to continually update its weighting and models to account for evolving language, but that’s probably not a completely unsolvable problem.

So maybe “never” is an exaggeration. As currently expressed, though, I think I can probably stand by my assertion.

8·1 day ago

Proven? I don’t think so. I don’t think there’s a way to devise a formal proof around it. But there’s a lot of evidence that, even if it’s technically solvable, we’re nowhere close.

1551·1 day ago

We will never solve the Scunthorpe Problem.
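
For anyone who hasn't run into it, here is a minimal sketch of why the problem is so stubborn. The blocklist entry and both function names are made up for illustration; this is not how any particular site's filter works.

```python
import re

# Hypothetical blocklist, chosen only to keep the example mild.
BLOCKLIST = ["hell"]


def naive_filter(text: str) -> bool:
    """Flag text if a blocked string appears anywhere inside it."""
    lowered = text.lower()
    return any(bad in lowered for bad in BLOCKLIST)


def word_boundary_filter(text: str) -> bool:
    """Flag text only when a blocked string appears as a whole word."""
    return any(
        re.search(rf"\b{re.escape(bad)}\b", text, flags=re.IGNORECASE)
        for bad in BLOCKLIST
    )


# Innocent text that contains the blocked string inside longer words.
innocent = "Michelle bought a seashell"
# Deliberate evasion by swapping one character.
evasive = "go to h3ll"

print(naive_filter(innocent), word_boundary_filter(innocent))
print(naive_filter(evasive), word_boundary_filter(evasive))
```

The substring check flags the innocent sentence, the whole-word check lets it through, and neither one catches the evasive spelling. Every patch for one failure tends to open up another.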

2·2 days ago

This adage is also reversible.

> LOL it wasn’t words that put people in camps.

No. It was words that dehumanized people, which justified putting them in camps.

> if someone’s using slurs today, then it says a lot more about the person using the words than it does about the people the words are referring to.

Yes. It says “you can’t trust me to have your best interests at heart, because I don’t see you as a person.”

> and frankly, if what you say is true, then announcing yourself as a credible threat right out in the open makes for a safer situation than keeping the threat hidden, as now we know who to avoid.

Except that the word’s normalization sends a message to the racists that it’s safe to continue dehumanizing that group. That’s the value a racist gets out of it; not “comedy” or “free speech,” but “this is a safe place for your racism to fester in the open.” It allows for groups of them to come together more easily, which gives them even more power. It’s in the best interest of an inclusive society for that comfort to be denied them.

> no one but me decides what I’M offended by,

But you’re the only one who can’t decide what you’re harmed by.

> and i can tell you i am more pissed off by misinformation, lies, and deceit than i am by any one “offensive” word

Slurs are all of those things, though. They’re an attempt to tie evil things to a group of marginalized people, and thus dehumanize them. That’s slander (misinformation, lies, and deceit, weaponized for harm).

> how many people need to be offended by “eastern” for it to be removed?

Zero. It’s not about offense, it’s about harm. Once more marginalized people are harmed by a word’s misuse than are served by its appropriate use, the word needs to be retired.

This isn’t an exact measure, of course. But it’s also illustrative of why the pearl-clutchers are missing the point when they say “why can’t they just have thick skin?”—because it’s not about offense, it’s about harm.

No one is being harmed by the word “boomer,” and boomers as a class aren’t marginalized. They’re just being annoyed that younger people are using it to call them out on the ways they’re not being good citizens.

101·4 days ago

Votes are anonymous. You can tell who voted, but not what they voted for. It’s crucial for the fairness of elections that a vote cannot be definitively connected to the individual who cast it; if you could, you could coerce or retaliate.

And all of the things you mention are the trust OP is talking about. You were a trusted person in that situation. The process increases and validates trust.

34·5 days ago

> who has been caught cheating on 66% of his wives

FTFY.

Harris gets to have her cake and eat it too, though; she can say she disagrees to give herself a little bit of distance from Joe in the eyes of the moderate right, and at the same time she gets the press bump on the moderate left from her boss saying it. Honestly, it’s probably going to go well for her.

The problem is that they never hear about the stuff MAGA folks do. Fox doesn’t report about it, but they blast Biden’s gaffes from the rooftops, meaning more mainstream news orgs have to cover Biden’s gaffes or risk looking like they’re playing favorites. So the MAGAs never hear about the evil stuff Trump says unless they go looking for it–which they only do if they want to hear it from him anyway.

2·7 days ago

Both can be true: it is too expensive, and there’s no money to be made. $840B wouldn’t put a dent in the launch costs for the tens of thousands of rockets we’d need to put into space over the next several decades in order to just get rid of the Pacific Garbage Patch, to say nothing of the rest of the trash on this planet.

And actually, there’s a third true thing: it wouldn’t help much. Having it on Earth isn’t the problem; it’s having it in the oceans that’s the problem. Partially because of the environmental impact, partially because of the biological impact, and partially because we don’t have access to it to reuse it, so we have to keep making more. Once we had it out of the oceans, we could recycle it or even just sequester it away.

3·7 days ago

- To get into the sun, we’d probably want to fuel the rockets in space using reaction material mined in space (from the moon or an asteroid). That would more or less eliminate the problem you’re talking about, which is why I kind of skipped over that in my comment. But you’re right; this is one of a million things that makes space travel hard and expensive.

- We can get up to any speed with enough time and fuel. The trash rockets would just need to get into a solar orbit, and then burn retrograde for a fairly long while. Or if you add a gravity assist in, this is doable today; the Parker Solar Probe got to (and indeed beyond) that speed, for instance. It’s easier and quicker when there aren’t squishy people aboard (we don’t tend to like acceleration much higher than 9.8 m/s²).
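
To put rough numbers on that retrograde burn, here is a back-of-the-envelope sketch. It assumes a circular Earth orbit, ignores Earth's own gravity well and any gravity assists, and treats "hitting the sun" as lowering perihelion to the solar radius; the constants are standard approximate values and the function names are just for illustration.

```python
import math

# Standard approximate values; the simplifications are assumptions of this sketch.
MU_SUN = 1.32712440018e20       # Sun's gravitational parameter, m^3/s^2
R_EARTH_ORBIT = 1.495978707e11  # 1 au, in metres
R_SUN = 6.957e8                 # solar radius, in metres


def circular_speed(r: float) -> float:
    """Speed of a circular solar orbit at radius r."""
    return math.sqrt(MU_SUN / r)


def speed_at_aphelion(r_aph: float, r_peri: float) -> float:
    """Vis-viva speed at aphelion of an ellipse with the given apsides."""
    a = (r_aph + r_peri) / 2  # semi-major axis
    return math.sqrt(MU_SUN * (2 / r_aph - 1 / a))


v_earth = circular_speed(R_EARTH_ORBIT)

# Speed to add to leave the solar system from Earth's orbit.
dv_escape = math.sqrt(2) * v_earth - v_earth

# Speed to shed so the orbit's low point just touches the sun's surface.
dv_graze_sun = v_earth - speed_at_aphelion(R_EARTH_ORBIT, R_SUN)

print(f"Earth's orbital speed: {v_earth / 1000:.1f} km/s")
print(f"Extra speed to escape: {dv_escape / 1000:.1f} km/s")
print(f"Speed to shed for sun: {dv_graze_sun / 1000:.1f} km/s")
```

Under those assumptions, you have to shed roughly 27 of Earth's roughly 30 km/s to reach the sun, versus adding only about 12 km/s to leave the solar system altogether.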


4·8 days ago

Oh, also: I don’t think it’s a stupid question. It’s a fun question. It might not be a workable plan, but I love thinking about this stuff.

21·8 days ago

- Just gathering all the trash would be tricky (and, rocket aside, if we could do it easily, we’d probably have done it already, and just put it in a big garbage dump or something). Think about a swimming pool with a bunch of fallen leaves in it; they’re moving around constantly, and if you swim toward one it’ll kind of move away from you or break up when you try to pull it out.

- Ok, let’s handwave getting the trash out of the ocean. It’s probably a solvable problem. First we need to sort it; all of the recyclables need to stay and be recycled, because we still need that material and because we need to reduce the weight. Compostable stuff can probably also just stay and be composted. Corrosive stuff probably shouldn’t go on a rocket. All of the wet trash (it came from the ocean, it’s all wet) needs to be dried out first; partially because we need the water, and partially because water is really heavy. And once we’ve done all of that…well, trying to figure out something productive to do with that big pile of dry trash is almost certainly going to be cheaper than launching it into space.

- Ok, let’s handwave that problem too; let’s imagine we’re just going to grab it out of the water, compress it, and get it onto a rocket. Except we’re going to need a whole lot more than one rocket; a decent guess says that we’ve launched 18,003,266 kg into space ever (over our entire history in space), but the Pacific Garbage Patch alone is estimated to be at least 45,000,000 kg. That’s more than twice the mass, so we’d need to launch more than twice the number of rockets we’ve ever launched before. More than 60,000 rockets have been launched since 1957, so that’s substantial. It would take a while; even if we turned the entire space industry’s output toward the project, they’re “only” launching about 1,000 rockets a year nowadays, so it’d take at least 120 years of NASA, SpaceX, Blue Origin, Roscosmos, the ESA, the Chinese Space Agency, etc. doing nothing but trash full-time.

- Ok fine. Again, we’re handwaving; let’s imagine we have everything loaded up on rockets on the launch pad. Just getting it into orbit is tough for the simple reason that we have to take not just the payload (the trash) but also the fuel we need to get it there, and to get that fuel off the ground we need fuel, and to get that fuel off the ground, we need... you get the picture. The Tsiolkovsky rocket equation governs how much, and thankfully the total is finite, even though it grows exponentially with the change in velocity we need. But we will still need a lot of rocket fuel. Good thing we’re devoting the entire space industry’s output toward this for the next 120 years.

- Now it’s all in space. Great! That was actually the easy part. We could just leave it in orbit around Earth; that would be a really really bad idea for a lot of reasons (but it’s what we’re already doing with our space junk, so…), and you said “into the sun,” so let’s talk about getting it there. Believe it or not, getting it into the sun is actually way harder than getting it out of the solar system entirely. If you were on a rocket, and you pointed it toward the sun, and you burned and burned and burned and burned until you ran out of fuel, you would counterintuitively end up somewhere out past the Earth’s orbit on the other side of the sun. This is because you have to actually cancel out your (very fast) orbital velocity, which you inherited from the Earth when you launched, before you can get pulled into the sun; otherwise you just end up going around the sun in a very elliptical orbit. It takes a lot of fuel to cancel out Earth’s substantial orbital velocity. So we have to get that up there too.

- The good news is, once you get it to the sun, you’re good. It won’t cause any noticeable change to the sun (the entire Earth could fall into the sun and it wouldn’t care). And while the trash would initially melt and then burn due to all the heat, smoke is entirely a product of atmosphere and gravity; so no smoke would be generated and it would not make it back to Earth. But once all the ash made it to the sun, it wouldn’t continue burning per se; the sun doesn’t produce heat by burning, but by fusing lighter elements into heavier ones. The Garbage Patch is mostly plastic, so carbon polymers. But the sun isn’t big enough to fuse carbon into magnesium, which means all of those carbon atoms would just kinda…sink into the sun, hanging out under all the hydrogen and helium and lithium and beryllium and boron, but on top of the nitrogen and oxygen and such, for the next five billion years or so until the sun turns into a red giant. Then, the sun will expand outward, potentially to engulf the Earth’s orbit; at which point it will reclaim all the atoms of the trash we didn’t send up there.

- Eventually, after a bunch of different cycles and drama, the constituent atoms of our trash and everything else would become part of the white dwarf that our sun will become; a small, slowly-cooling stellar remnant. After that…we don’t know! The time it takes for a white dwarf to cool completely is longer than the life of the universe so far, so we have to speculate. It’s possible that the remnants of our sun and our trash and everything else might end up becoming a black dwarf, which might look like a shiny spherical mirror the size of the Earth.

All of that seems like a lot of work. I think we should try something else.
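
If you want to poke at the launch-count and fuel numbers above, here is a rough sketch. The mass and launch figures are the ones quoted in this comment, taken at face value; the delta-v to low Earth orbit (about 9,400 m/s) and the exhaust velocity (about 3,500 m/s) are ballpark values I'm assuming for illustration, not the specs of any real rocket.

```python
import math

# Figures quoted in the comment above, taken at face value.
MASS_LAUNCHED_EVER_KG = 18_003_266
GARBAGE_PATCH_KG = 45_000_000
ROCKETS_LAUNCHED_EVER = 60_000
ROCKETS_PER_YEAR = 1_000

# Ballpark assumptions for illustration only.
DELTA_V_TO_LEO = 9_400.0    # m/s, including typical losses
EXHAUST_VELOCITY = 3_500.0  # m/s


def years_of_launches() -> float:
    """Years of launches at today's rate to lift the patch's mass."""
    mass_ratio = GARBAGE_PATCH_KG / MASS_LAUNCHED_EVER_KG
    rockets_needed = mass_ratio * ROCKETS_LAUNCHED_EVER
    return rockets_needed / ROCKETS_PER_YEAR


def mass_ratio(delta_v: float, exhaust_velocity: float) -> float:
    """Tsiolkovsky rocket equation: liftoff mass over burnout mass."""
    return math.exp(delta_v / exhaust_velocity)


def propellant_fraction(delta_v: float, exhaust_velocity: float) -> float:
    """Share of liftoff mass that has to be propellant."""
    return 1 - 1 / mass_ratio(delta_v, exhaust_velocity)


print(f"Years of launches: {years_of_launches():.0f}")
print(f"Liftoff/burnout mass ratio: "
      f"{mass_ratio(DELTA_V_TO_LEO, EXHAUST_VELOCITY):.1f}")
print(f"Propellant share of liftoff mass: "
      f"{propellant_fraction(DELTA_V_TO_LEO, EXHAUST_VELOCITY):.0%}")
```

Using the actual mass ratio (about 2.5) instead of rounding down to 2 pushes the estimate to around 150 years, which is consistent with the "at least 120" above. The rocket equation part shows why the fuel bill is finite but steep: doubling the delta-v squares the mass ratio.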


2·9 days ago

Whatever the maga hive mind decides over the next couple days.

5·9 days ago

A lot of someones did remind him of that after Hurricane Maria, when he loudly bragged about getting on the phone with “the president of Puerto Rico” and was subsequently reminded that that was him.

Honestly, that’s probably 90% of the reason he even went to Puerto Rico (and threw paper towel rolls at them).

7·9 days ago

It’s even worse: it’s “I never thought the leopards who said they would eat all faces and actually ate several on live TV would ever actually eat any faces,” says person voting for leopards eating faces party because they promised to eat faces.

It’s not really restraining them at the moment.